Insight

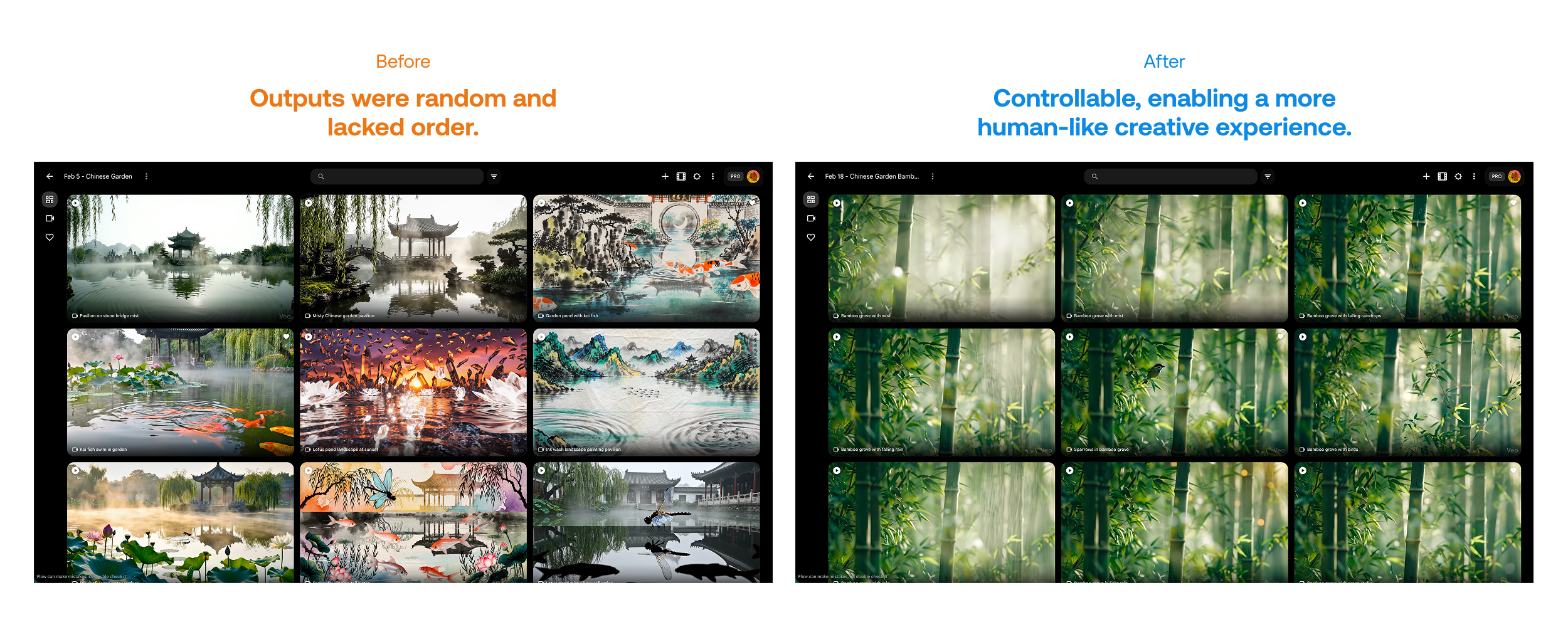

Digital controllability defines the next era of comfort

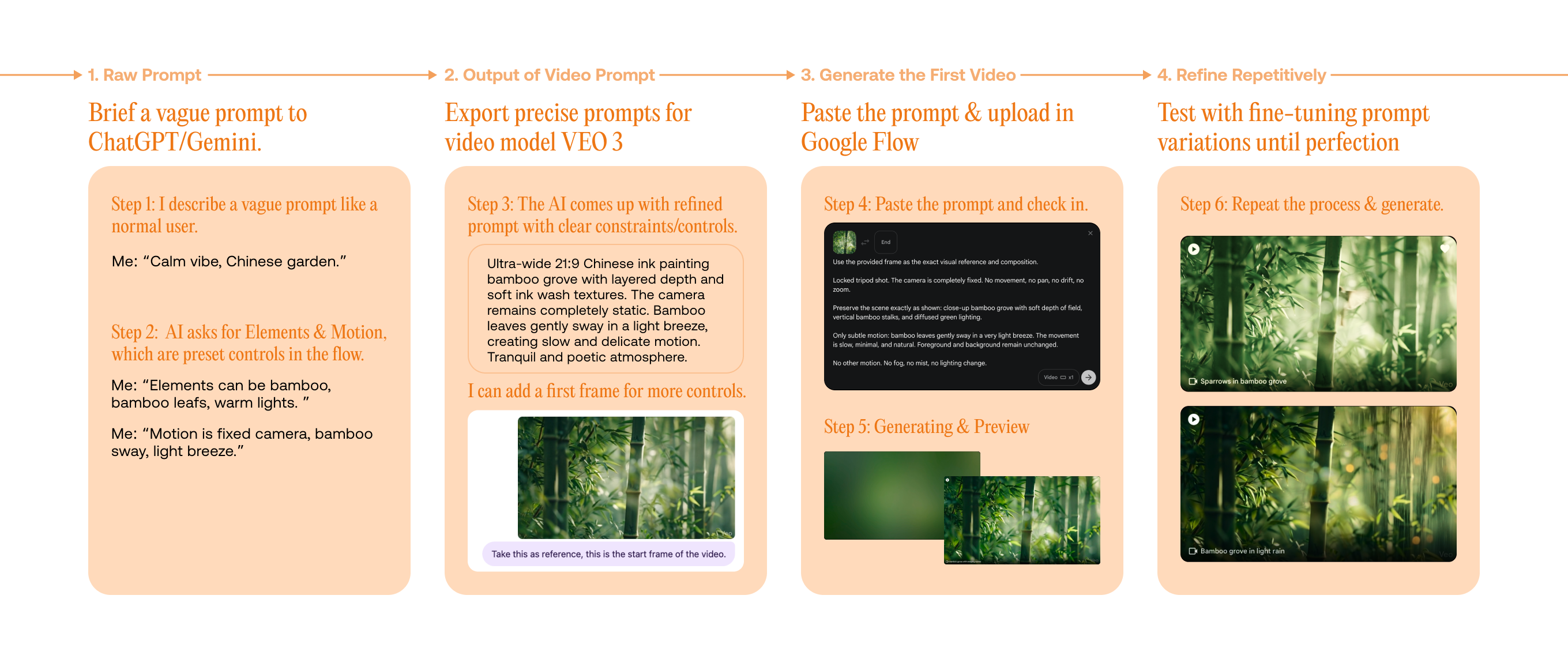

As intelligent cabins evolve, the vehicle is becoming a space where people choose how to spend their time, whether relaxing, exploring, or staying productive. Advances in AI now allow experiences to be generated and adjusted in real time, so each passenger can shape their own activities and surroundings during the ride.

In this context, the ability to control and customize digital experiences defines the next era of comfort.