Insight

Digital controllability defines the next era of comfort

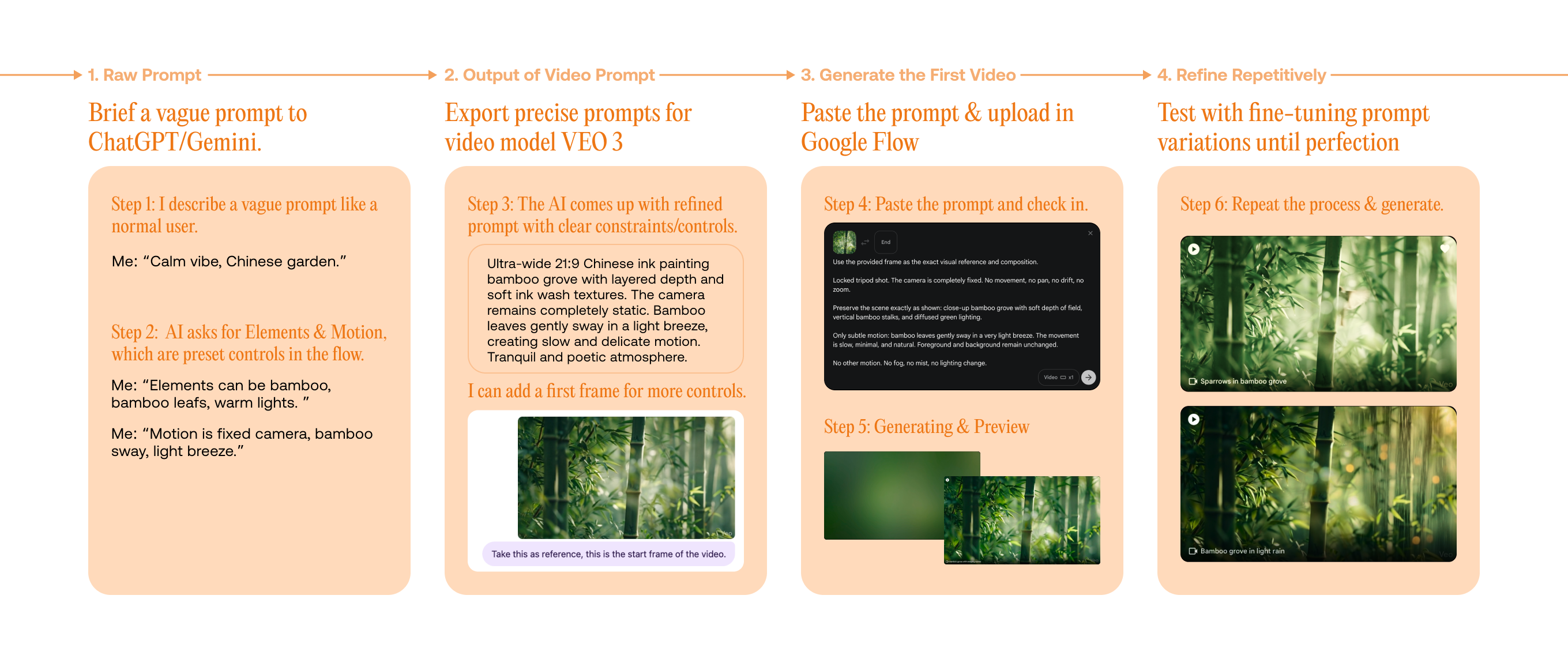

I observed that AI is rapidly gaining the ability to generate images, videos, and even functional apps within seconds. Meanwhile, ride time is becoming part of a new lifestyle: a space for relaxation, exploration, or planning ahead. Together, these shifts revealed an opportunity to generate in-ride experiences on demand while leaving passengers free to customize what they use during the ride.